aubio

Playing with Shazam fingerprints

It's already 6 years back that Avery Wang published his paper about his Shazam fingerprinting algorithm: An Industrial-Strength Audio Search Algorithm", in Proc. 2003 ISMIR International Symposium on Music Information Retrieval, Baltimore, MD, Oct. 2003.

A few weeks ago, I took some time to read the paper and implement the algorithm. It took less than 100 lines of python, and the results are quite impressive. Unfortunately, after asking Avery Wang, I did not get the permission from the patent holders to publish these 100 lines of code. Sadness! Still, many thanks to Avery for his help with getting in touch with them.

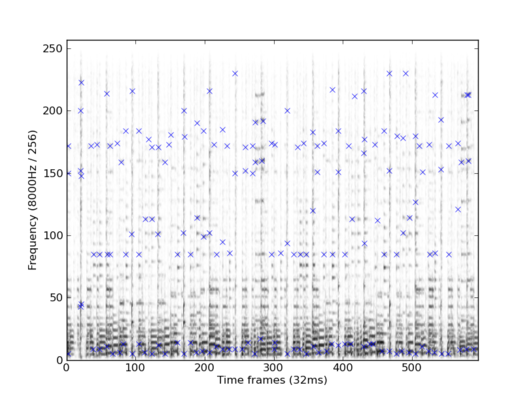

Although I can't publish this implementation, I prepared a few plots to show were it got me. Below is an example of the landmarks extracted from a 20 seconds sound sample.

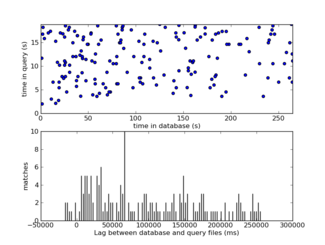

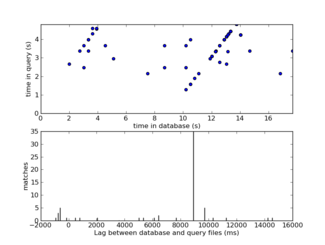

And some examples of search results, with similar plots as the ones in Avery's paper: scatter plot of matching landmarks (top) and histogram of time differences (bottom). The first image shows two signal that do not match, the second one shows a match.

On my laptop, it takes a little less than 8 seconds per minute of music to extract the fingerprints from an MP3 file. Not too bad for a python script, and quite promising for the newly rewritten python aubio module. The search algorithm I wrote works in O(N), with N the total number of fingerprints in the database. There is much space for improvements. For instance storing the fingerprints in a dictionnary instead of a flat list would probably speed it up to O(log(N)).

To test the robustness of my simple implementation, I tried adding noise, echo, and mixing with a high noise level, but the algorithm remains quite robust to this type of signal deterioration. Mixing two music signal does become problematic though.

While writing this code, I didn't know about Dan Ellis' implementation for Matlab. I also found more articles since, How Shazam Works and Shazam: not magic after all, as well as in Slate Magazine. In the paper Fingerprinting to Identify Repeated Sound Events in Long-Duration Personal Audio Recordings, in Proc. ICASSP-07 Hawaiʻi, pp.I-233-236 (2007), James Ogle and Dan Ellis use the algorithm to look for structural similarity within a recording (see also the poster). A very nice idea. Looking at the other patents submitted by Avery Wang, he also thought about such applications.

Looking for two similar songs was one of the thing I tried to test my implementation. The above plot, on the left, represents the number of matching fingerprints against the position in the query and was obtained using two versions of the same song: the famous disco version of What a Diff'rence a Day Makes, by Esther Phillips, published in 1975 on the excellent Kudu/CTI label. The first version, the second track on the eponymous album, is 4:31 minutes long. The second version, track number 9, is 3:11 minutes long.

By now you should wonder: why two large peaks and not just one? The color map, on the right, shows the 2D histogram of the fingerprints matching exactly in the song. The difference is now clear: in the short version, the second minute (a saxophone solo) has been cut out of the long version. Other patterns seem to appear outside of the diagonal, but not clearly enough to be interpreted ‒ the search algorithm would need to be modified to allow fuzzy matching, in a similar way as in Ogle and Ellis paper.

Avery's article mentioned how he could pick up lip syncing. There are many more applications to be considered: comparing two live performances, extracting automatically the playlist from a movie or a DJ performance, analysing the structure of a song, scrobbling vinyl records...

Unfortunately, it seems like I will have to scrobble my vinyl records alone for now, and wait patiently for these patents to expire to do that openly.

Wed, 11 Nov 2009, 23:39. trackback - view/add comments

Responses to this post

@ken: As I mentioned in the post, I told Avery I would not distribute the code. The algorithm is fairly simple though, and I'd be happy to answer your questions if you miss a few steps.

Posted by piem at Thu Dec 3 16:08:03 2009

Is it the patent exactly? http://www.boliven.com/patent/US7516074 We will be a little older :)

Posted by Marko at Thu Dec 17 11:37:51 2009

very bad this patent thing. And what about applying the idea to different fields (i.e. other time series, not music?) and/or slightly modifying the algorithm? how far does the patent protection go?

Posted by Roberto at Thu Jan 7 11:56:39 2010

I have never seen a toy implementation of a patented algorithm being actively refused distribution before.

To be honest I wouldn't have even thought to bother to ask Avery and just released it. Jeez I have knocked up plenty of toy implementations of algorithms over the years and never check to see if they are patented.

It is quite a joke.

Posted by Ikkath at Mon Jun 14 04:42:25 2010

Would you share your python code with me? nacho4d@gmail.com I am interested in a sound project. Thanks

Posted by Ignacio at Mon Jul 12 02:48:13 2010

Nice!

can you at least send the code directly over e-mail? Thanks.

Posted by ad at Wed Jan 12 16:56:20 2011

I am also interested in the 100 lines of code, if you could send it via email that would be great!!

Posted by mike at Wed Feb 9 00:08:38 2011

very interesting.

could u send me the codes, it's very useful for the algorithm investigation.

Posted by paul at Sat Mar 19 07:40:58 2011

I've tried Dan Ellis' Matlab implementation of the algorithm and it works amazingly well. However, it is quite complicated to understand every part of it. I'm not really familiar with Matlab anyway. 100 lines of python sounds much easier to understand. Could you send me the code (for educational purposes only)?

Posted by clav at Thu Mar 24 22:50:26 2011

I've been trying to do this myself in python but I don't quite understand how the landmarks are calculated. I have the read in a wav file done an FFT on a the chunks and found the loudest freq for each chunk.. any pointers would be greatfully received!

Posted by Ad at Tue Mar 29 17:45:19 2011

I am interested in your 100 lines of Python code, would really appreciate if you could send it via email (rabihma at gmail.com )

Posted by Rabih Maalouf at Thu Jul 28 10:45:28 2011

hi, may i know your email address ?

i want to ask you some question regarding to this sound recognition.

just send me an email if you dont mind :)

Posted by lmiisahuman at Fri May 11 11:49:51 2012

Hi, i have a project for Measuring Radio Station exposure to a designated group of people (about 900 peoples). Would be interested to employ your Sound compare/search code to match the sound file recorded by them throughout each day.

Will you be interested to participate and assist us in this project?

Hope for your favorable reply.

thanks.

Posted by Kison Ghan at Wed May 30 19:11:52 2012

Hi,

I would also be also very happy to get these few lines of code, I am actually comparing different fingerprinting algorithms.

Thanks a lot

lila

Posted by lila at Wed Jun 27 14:10:42 2012

Hello,

I am working on a school project on music searching, so would you please let me take a look at the 100 line python code?

email: ooooxxxx2003@hotmail.com

Thank you very much!

Posted by Tim at Sun Nov 4 10:36:45 2012

Hello,

I am working on a school project on music searching, so would you please let me take a look at the 100 line python code?

email: ooooxxxx2003@hotmail.com

Thank you very much!

Posted by Tim at Sun Nov 4 10:36:48 2012

Hello Sir, I have read your post regarding audio fingerprinting matching(Shazam) and we are trying to implement the same. But the problem is that we are getting close match but not exact match with the points like (1,71,86,172,259) and (1,71,86,173,259).. Do you have any idea what could possibly cause this? Can it happen due to some microphn noise. Our implementation is exactly same as yours . I would appriciate if you could give me some advice regarding this.

Thank you in advance.

Posted by AD at Sat Dec 8 12:22:24 2012

Hi,

I tried implementing this in Java, but look like getting these key points and matching mechanism doesn't work so well. Can you please send me Pythin code to analyse it? Thanks id advance.

Posted by Jovan at Thu Dec 20 17:56:10 2012

Hi,

I am trying to play with Shazam algorithm (Java) in my spare times. I am treating spectral peaks in each frame as landmarks but this does not seem to work :-( I would really appreciate your help if you guide me on how to find those ROBUST LANDMARKS.

Thank you in advance.

Yasmin Kha

Posted by Yasmin Kha at Fri Dec 28 11:17:44 2012

Nice job%uFF01

I have also tried Dan Ellis' Matlab implementation of the algorithm%uFF0Cit works well%uFF0Cbut it contains a lot files%uFF0Cthat is some complex.

And now i am doing a research just like shazam do(school research and non-commercial). I want to have a test by using it, would you like to send the code to me?(I won't send it to anyone)

Thank you for your attention to this matter!

A Student from UESTC of china.

Just thanks again!

Posted by way at Thu Mar 7 14:40:09 2013

Nice job!

I have also tried Dan Ellis' Matlab implementation of the algorithm%uFF0Cit works well%uFF0Cbut it contains a lot files%uFF0Cthat is some complex.

And now i am doing a research just like shazam do(school research and non-commercial). I want to have a test by using it, would you like to send the code to me?

Thank you for your attention to this matter!

A Student from UESTC of china. PS Email:whu.way@gmail.com

Posted by way at Thu Mar 7 14:41:41 2013

Hey there! I would like the 100 lines of code for a project please. I would really appreciate it. Thanks!!

Posted by nvittal at Wed Mar 27 01:09:46 2013

Well done man !

I work on a similar project with a new approach. Feel free to contact me.

jeremievauclin@yahoo.fr

Regards

Posted by Vauclin at Sun Apr 7 18:13:33 2013

My research is this topic for school.can you send the code to me?

Posted by nahid at Fri Nov 1 17:59:47 2013

Hiii,

Thanks for nice article.I want to ask one thing.

Is it necessary to convert stereo song to mono(while fingerprint creation) as user input will be mono.

plz reply

Thanks

Posted by Him at Fri Nov 1 18:06:59 2013

Hi,

Thanks for this nice article. Actually I've implemented whole algorithm, I'm stuck at the end of the algorithm that "How to know which song is matched after processing" ?

I've got

Posted by Arsalan at Sun Dec 8 14:45:52 2013

Hi,

I tried to implement this algorithm in Java, but it's quite difficut;;

Can you please send me Python or java code to analyse it?

plz, help.. me..

Posted by yun at Mon Jan 27 05:41:34 2014

Weird. The author says early on that he can't distribute his source code, yet people keep asking for the source code. If you can't understand plain English, how would you understand the code even if you had it?

Posted by Hank G. at Wed Apr 9 20:53:47 2014

Thank you for your article.Really thank you! Really Great.

Posted by GraserOi at Mon Nov 24 06:07:28 2014

Hi

Can I get the full source code you implemented?

It would be very helpful for me to further study.

Thank you.

Posted by aaron at Mon Dec 29 08:54:09 2014

I'd like a copy of your source code pls: evandrix@gmail.com

Posted by evandrix at Sun Aug 2 18:29:39 2015

Hello!

I'm trying to learn how to use aubio to do a similar project for a class. However, I don't understand how you generated the graphs. Can you explain that?

Additionally, can you possibly show how you split up the sound that you inputted in the wav file 3 times per second during real time?

Thanks,

Shivani

Posted by Shivani at Wed Dec 2 01:50:54 2015

Leave your own comment

© 2003-2017 the aubio team | cc-by-sa